Why does EDR matter?

Laptops and PCs are an important point of entry for cyber attackers because they are operated by humans who can easily be tricked into clicking on malicious links or email attachments. When those laptops and PCs are used in an enterprise, they are called endpoints, and they often run specialized software, such as Endpoint Detection and Response (EDR), to mitigate cyber threats.

Which EDR performs best?

Thanks to MITRE, a US non-profit organization, we can now compare the performance of various Endpoint Detection and Response (EDR) solutions. This evaluation is unique because it emulates a well-documented threat group, APT3, in a lab environment and tracks detections along the entire attack path. MITRE published the results but deliberately without rankings, scores or ratings. Make up your own mind.

This is MITRE. They solve problems, and apparently they're not afraid to point out that "cyber" is the fifth domain of warfare, alongside land, sea, air, and space.

In the cyber domain, they’re best known for the MITRE ATT&CK matrix, a knowledge base that helps organizations think about their cyber defense from the attacker's perspective: from initial access, via privilege escalation and lateral movement, to impact.

We approve of us!

MITRE doesn't assign scores in its EDR evaluation, and in this ranking vacuum, you can only imagine what most vendors did:

- "Carbon Black outperforms all other EDR solutions" (source)

- "CounterTack Platform leads with fast automated detections" (source)

- "CrowdStrike Falcon […] the most effective EDR solution" (source)

- "[…] Endgame as the first zero training endpoint protection" (source)

- "FireEye Endpoint Security […] the most effective EDR solution" (source)

- "[…] evaluation showcases the effectiveness of SentinelOne’s platform" (source)

- "[…] Windows Defender ATP demonstrated industry-leading optics […]" (source)

- "[…] Cybereason best enables defenders […]" (source)

- "Cortex XDR and Traps Outperform in MITRE Evaluation" (source)

What are the results?

Loading the evaluation results into Splunk with this Python script produces the charts below.
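The loader script itself isn't reproduced here. As a rough idea of what such a step can look like, here is a minimal sketch that flattens nested per-vendor results into one JSON event per (vendor, step, detection type), a newline-delimited format Splunk can index with its built-in `_json` source type. The input shape, vendor names and step IDs are hypothetical; MITRE's published files use their own schema.

```python
import json

# Hypothetical input: vendor -> attack step ID -> detection types recorded for that step.
# This shape is an assumption for illustration, not MITRE's actual file format.
results = {
    "VendorA": {"step-001": ["Telemetry"], "step-002": ["None"]},
    "VendorB": {"step-001": ["Specific Behavior", "Telemetry"], "step-002": ["Telemetry"]},
}


def to_json_lines(results):
    """Flatten nested results into one JSON string per (vendor, step, detection type)."""
    lines = []
    for vendor, steps in sorted(results.items()):
        for step_id, detections in sorted(steps.items()):
            for detection in detections:
                event = {"vendor": vendor, "step": step_id, "detection_type": detection}
                lines.append(json.dumps(event))
    return lines


# Print newline-delimited JSON; redirect it to a file for Splunk to index.
for line in to_json_lines(results):
    print(line)
```

One event per detection keeps every chart a simple count in Splunk, at the cost of repeating the vendor and step fields.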

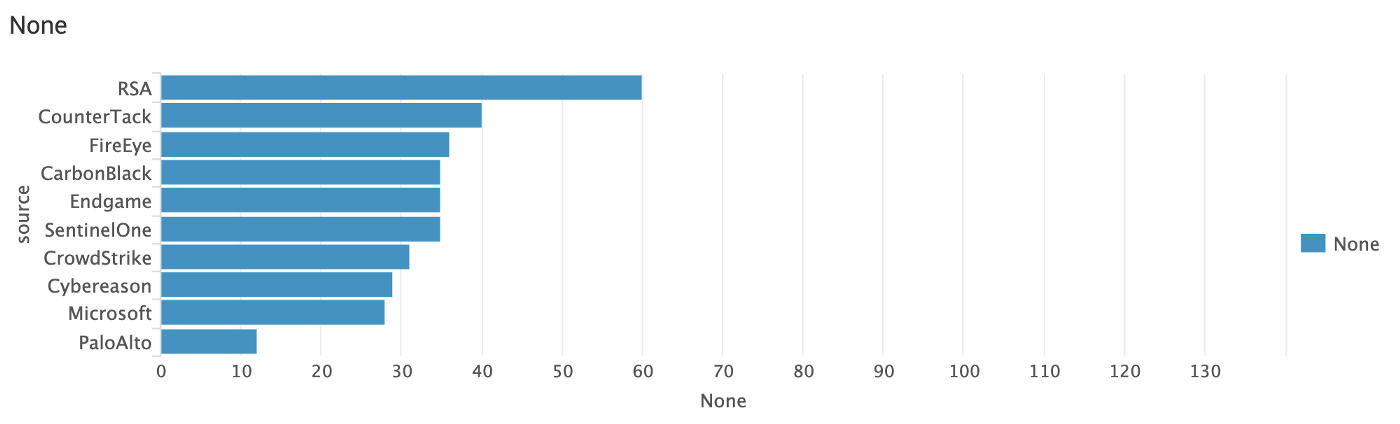

The evaluation emulated 136 steps of an advanced persistent threat. For example, the first chart shows that the leader for the main detection type "None" failed to detect 60 of the 136 attacker steps. You can read up on the main detection types here.
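Reading the chart comes down to counting distinct attack steps per vendor and detection type, then picking the vendor with the highest count for a given type. A minimal sketch on made-up events (the vendors, step IDs and three-step total are hypothetical; the real evaluation had 136 steps):

```python
from collections import defaultdict

# Hypothetical flattened events: (vendor, step ID, detection type).
events = [
    ("VendorA", "step-001", "Telemetry"),
    ("VendorA", "step-002", "None"),
    ("VendorA", "step-003", "None"),
    ("VendorB", "step-001", "Specific Behavior"),
    ("VendorB", "step-001", "Telemetry"),
    ("VendorB", "step-002", "Telemetry"),
    ("VendorB", "step-003", "General Behavior"),
]
TOTAL_STEPS = 3  # toy value; the actual evaluation emulated 136 steps


def steps_per_type(events):
    """Count distinct attack steps per (vendor, detection type)."""
    steps = defaultdict(set)
    for vendor, step, detection in events:
        steps[(vendor, detection)].add(step)
    return {key: len(step_set) for key, step_set in steps.items()}


def leader(counts, detection_type):
    """Return (vendor, count) with the most steps for one detection type, or (None, 0)."""
    candidates = [(n, vendor) for (vendor, dt), n in counts.items() if dt == detection_type]
    n, vendor = max(candidates, default=(0, None))
    return vendor, n


counts = steps_per_type(events)
vendor, missed = leader(counts, "None")
print(f"{vendor} missed {missed} of {TOTAL_STEPS} steps")
```

Counting sets of step IDs rather than raw events prevents a step with several detections of the same type from being counted twice.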

Who detected the fewest APT3 steps?

It may not be a coincidence that RSA is missing from the cheering press release crowd.

Which EDR system logs the most telemetry?

If you just want the logs so you can create your own rules in a SIEM, look no further than this chart (or Sysmon).

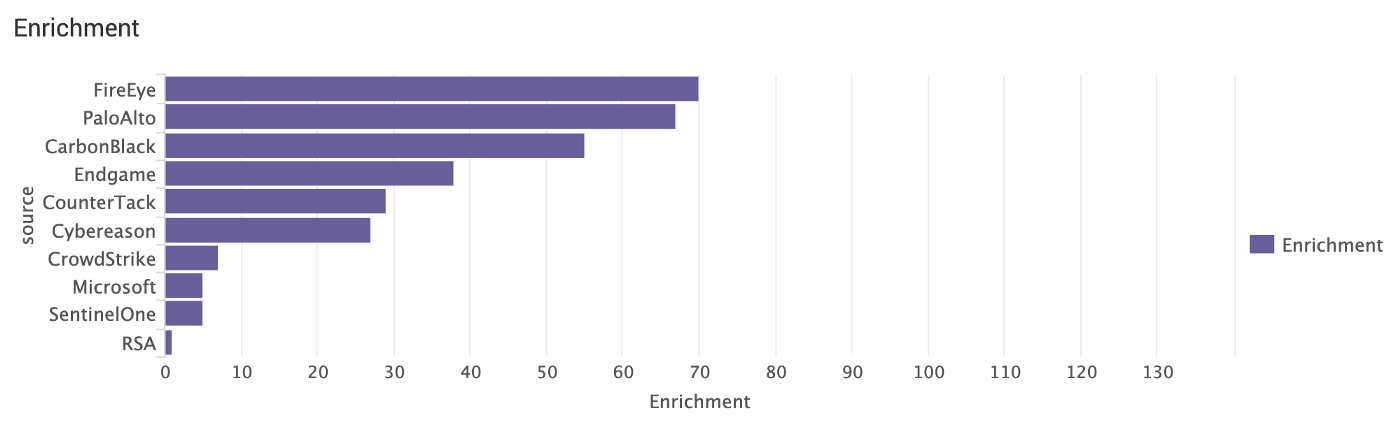

Who enriches events the most?

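Enrichment is telemetry++: the raw event is annotated with extra context, such as a rule name, a label or an ATT&CK technique.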

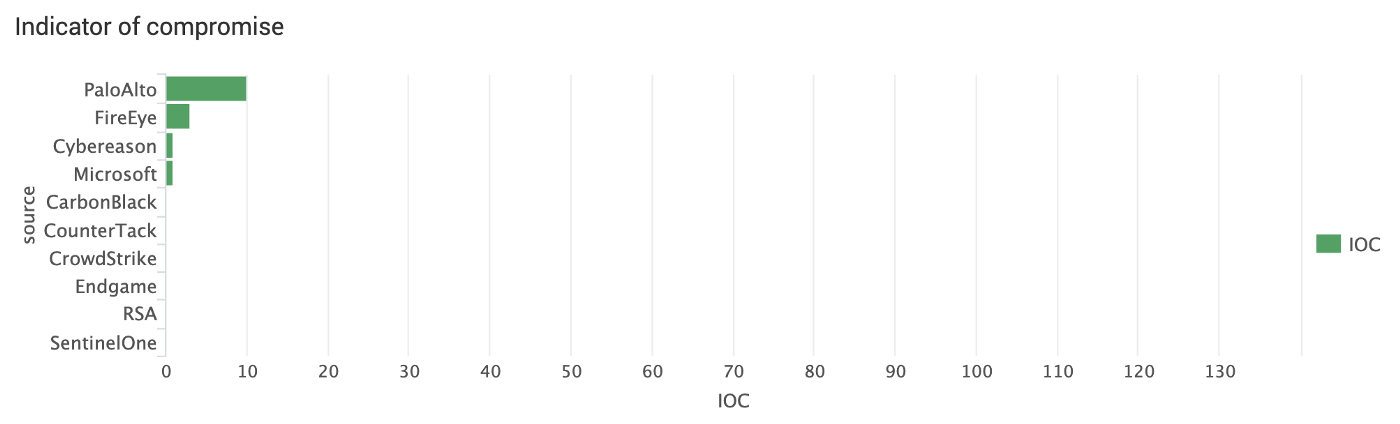

Whose IOC triggered on APT3?

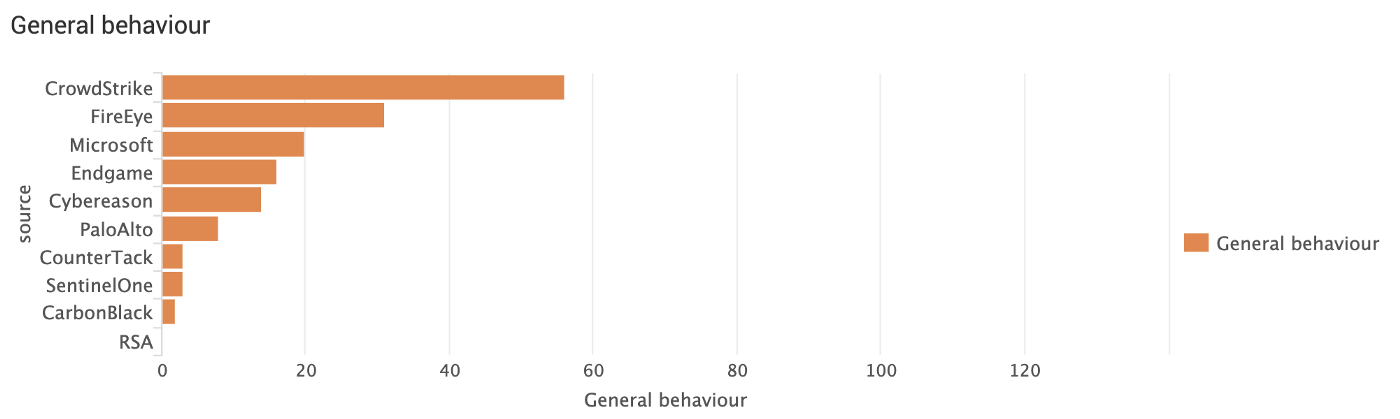

Who detects the most general attacker behaviour?

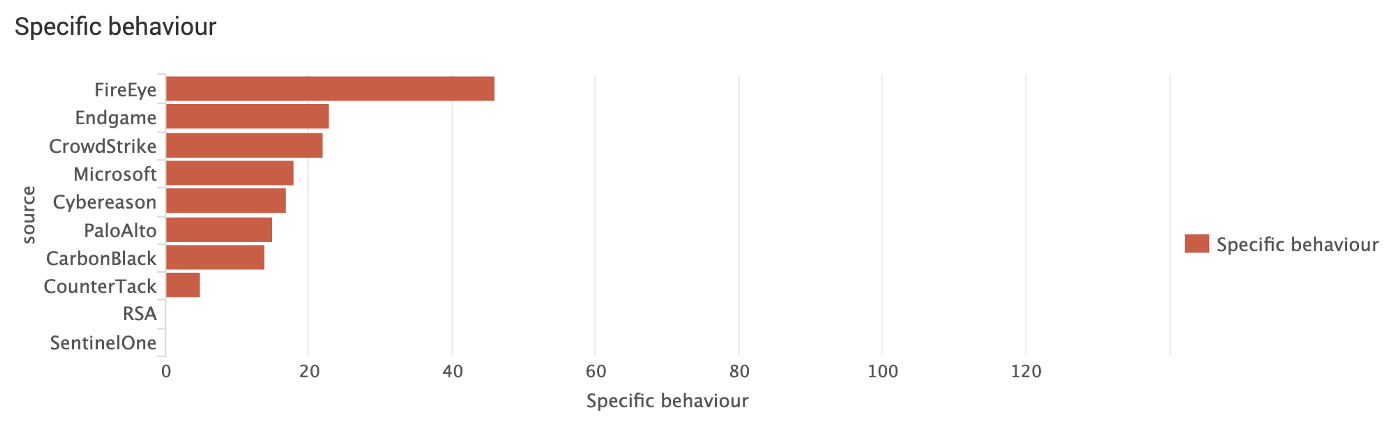

Who detects the most specific attacker behaviour?

Another dimension

Besides the main detection types, the EDR evaluation includes three modifiers. Read up on them here.

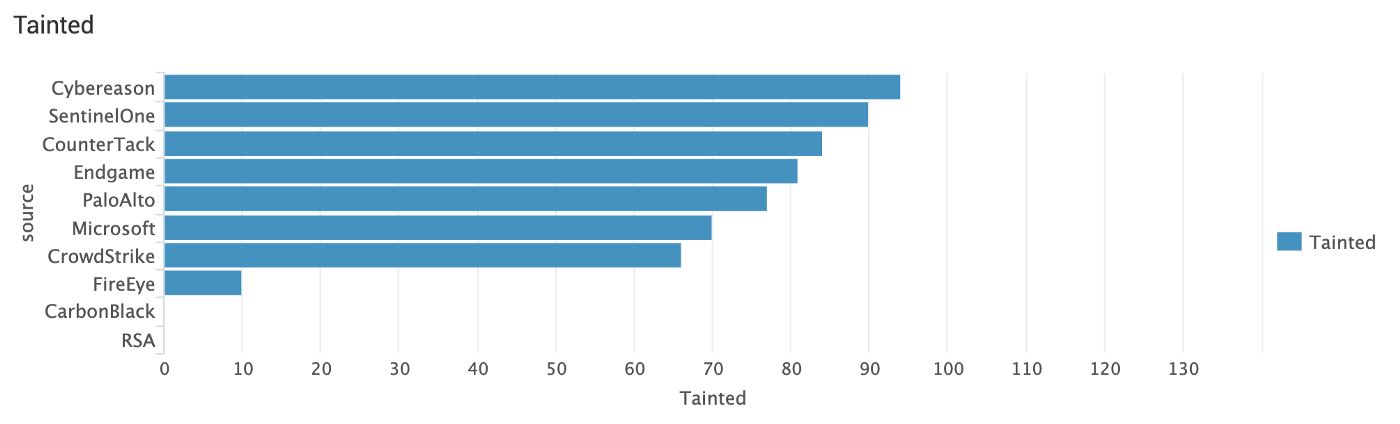

Tainted

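Tainted means the detection is correlated with an earlier detection, for example one that already flagged the parent process as suspicious. That helps analysts follow the attack chain.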

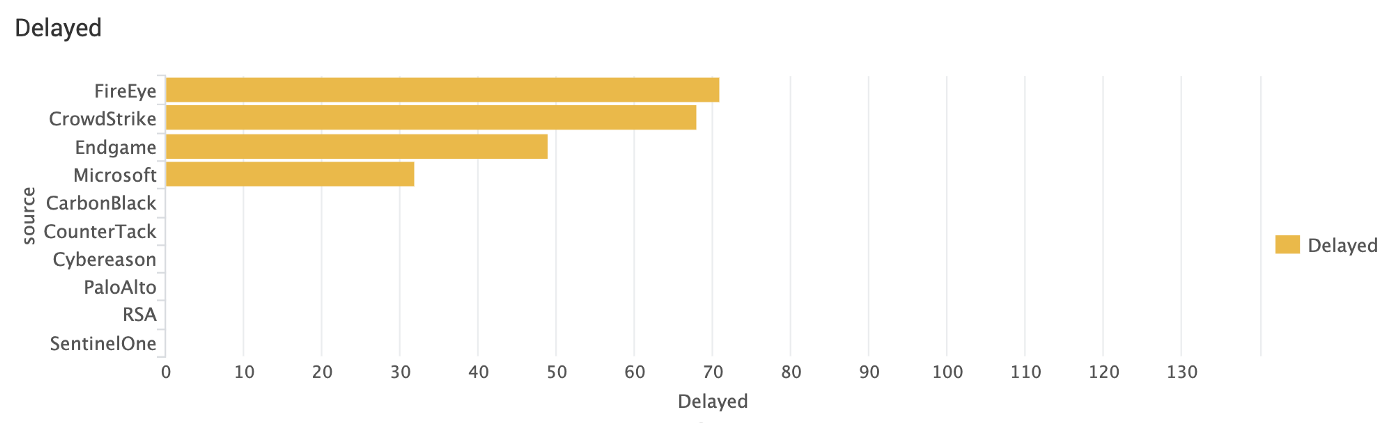

Delayed

Delayed means the detection was not available in real time, for example because it required additional processing first.

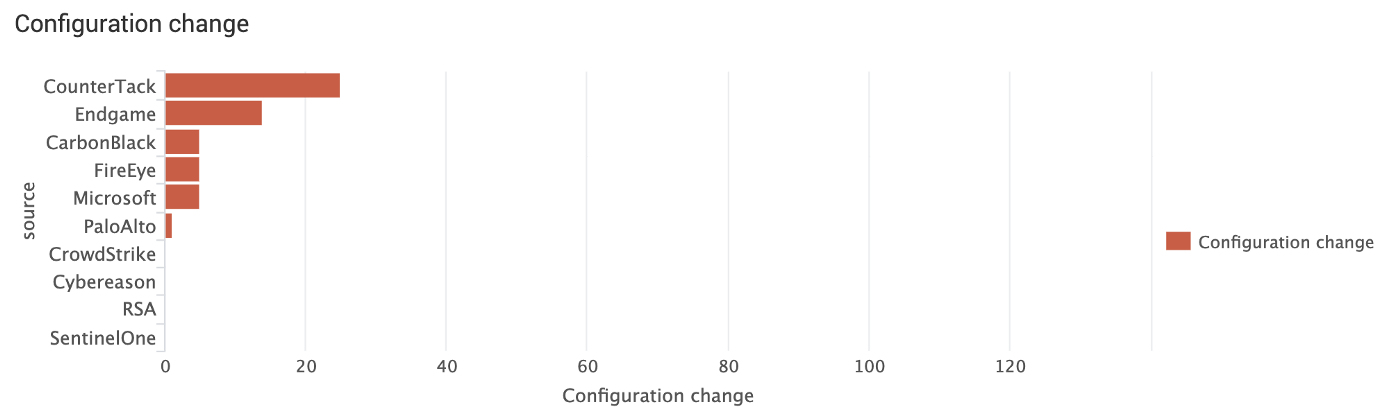

Configuration change

This means APT3 steps were detected, but only after the vendor changed the product's configuration during the evaluation.

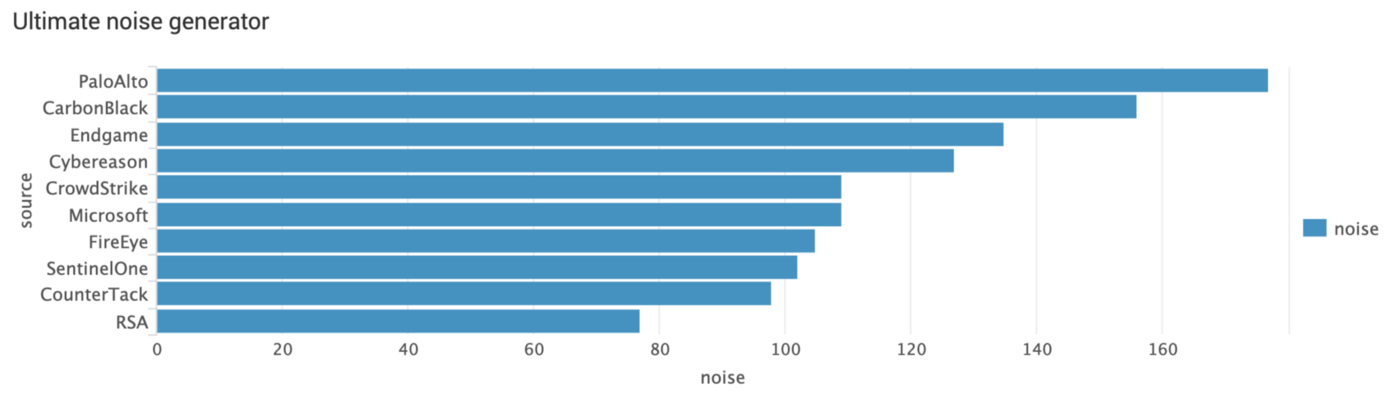
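To gauge how much the modifiers matter, one option is to count a vendor's detected steps twice: once with every detection, and once ignoring detections flagged Delayed or Configuration Change. The sketch below does this on made-up events; the tuple layout, step IDs and the choice to keep Tainted detections (correlation, not vendor help) are assumptions, not part of MITRE's methodology.

```python
# Hypothetical events: (vendor, step ID, detection type, set of modifiers).
events = [
    ("VendorA", "step-001", "Specific Behavior", {"Tainted"}),
    ("VendorA", "step-002", "General Behavior", {"Delayed"}),
    ("VendorA", "step-003", "Telemetry", {"Configuration Change"}),
    ("VendorA", "step-004", "None", set()),
]

# Modifiers treated as "not a fair detection" in the strict count (an assumption).
EXCLUDED = frozenset({"Delayed", "Configuration Change"})


def detected_steps(events, vendor, excluded=frozenset()):
    """Distinct steps with at least one detection other than 'None',
    skipping detections that carry any modifier listed in `excluded`."""
    return {
        step
        for v, step, detection, modifiers in events
        if v == vendor and detection != "None" and not (modifiers & excluded)
    }


lenient = detected_steps(events, "VendorA")
strict = detected_steps(events, "VendorA", EXCLUDED)
print(len(lenient), len(strict))
```

On this toy data the lenient count is 3 and the strict count is 1, because only the Tainted detection survives the filter.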

Which EDR is most suitable as the ultimate noise generator?

If you only care about raw data and do your own correlation outside the EDR solution, this chart ranks vendors by their Telemetry, Enrichment and IOC results:

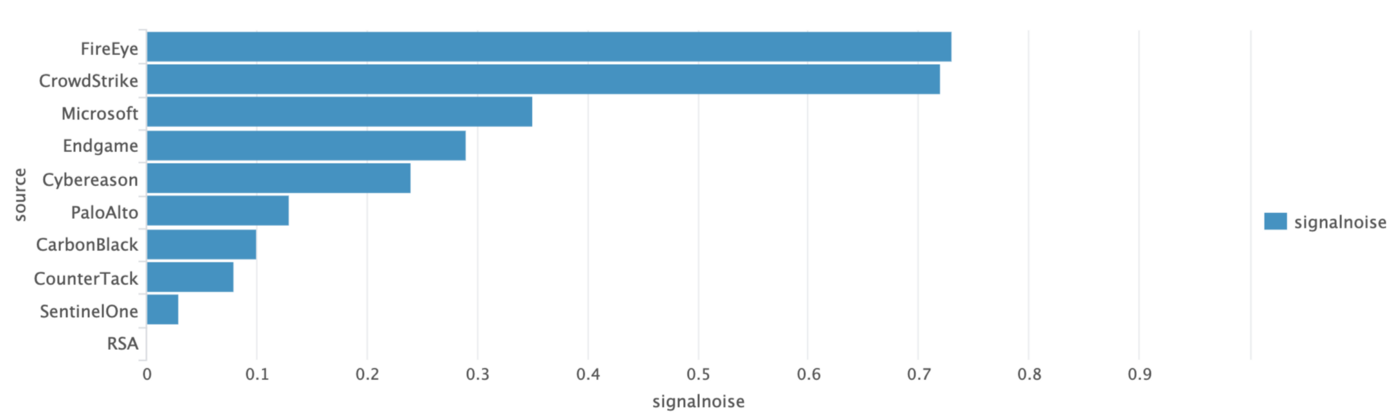
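Assuming the ranking simply sums the steps a vendor scored under Telemetry, Enrichment and IOC, it can be sketched as follows. The per-vendor counts are made up, and the category labels are assumed to match MITRE's detection type names.

```python
# Detection types that count as raw data for this ranking.
NOISE_TYPES = {"Telemetry", "Enrichment", "Indicator of Compromise"}

# Made-up per-vendor step counts per detection type (not real evaluation data).
counts = {
    "VendorA": {"Telemetry": 90, "Enrichment": 10, "Indicator of Compromise": 2, "General Behavior": 5},
    "VendorB": {"Telemetry": 70, "Enrichment": 30, "Indicator of Compromise": 8},
}


def noise_ranking(counts):
    """Rank vendors by the summed step counts of the raw-data detection types, highest first."""
    totals = {
        vendor: sum(n for dt, n in per_type.items() if dt in NOISE_TYPES)
        for vendor, per_type in counts.items()
    }
    return sorted(totals.items(), key=lambda item: item[1], reverse=True)


for vendor, total in noise_ranking(counts):
    print(vendor, total)
```

Behaviour detections such as VendorA's "General Behavior" are deliberately left out, since they are already correlated signal rather than raw data.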

Which EDR has the best signal/noise ratio?

If you choose EDR solutions based on signal/noise ratio, this chart ranks vendors by their General or Specific Behaviour detections divided by their Telemetry, Enrichment or IOC results:
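The ratio described above can be sketched as (General + Specific Behaviour) / (Telemetry + Enrichment + IOC) per vendor. The counts below are invented, the labels are assumed to match MITRE's detection type names, and returning infinity when a vendor has signal but no noise is a design choice of this sketch.

```python
SIGNAL_TYPES = {"General Behavior", "Specific Behavior"}
NOISE_TYPES = {"Telemetry", "Enrichment", "Indicator of Compromise"}

# Invented per-vendor step counts per detection type (not real evaluation data).
counts = {
    "VendorA": {"General Behavior": 20, "Specific Behavior": 10, "Telemetry": 90, "Enrichment": 10},
    "VendorB": {"General Behavior": 5, "Specific Behavior": 25, "Telemetry": 50, "Enrichment": 10},
}


def signal_to_noise(per_type):
    """Behaviour detections divided by raw-data detections for one vendor."""
    signal = sum(per_type.get(t, 0) for t in SIGNAL_TYPES)
    noise = sum(per_type.get(t, 0) for t in NOISE_TYPES)
    if noise == 0:
        # Avoid division by zero: pure signal ranks highest, no data at all ranks lowest.
        return float("inf") if signal else 0.0
    return signal / noise


ranking = sorted(counts, key=lambda v: signal_to_noise(counts[v]), reverse=True)
print(ranking)
```

Both toy vendors have 30 behaviour detections, but VendorB buries them in less raw data, so it ranks first.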

Draw your own conclusions

There are endless ways to slice and dice the EDR evaluation results. If you try one, drop me a line with how and why.

- Download Splunk for free

- Download and install the EDR evals app from Splunkbase